Finding Range Through Compute Efficiency

Higher levels of driving automation and increasing layers of software are creating energy-gobbling EVs.

This past January, I was among the tens of thousands who returned to Las Vegas for CES, the show's reawakening after the pandemic. Some definite themes emerged from the dozens of conversations I had over four days. Particularly prominent among them was a renewed focus on the power efficiency of in-vehicle computing.

We're currently amid multiple transitions in the auto industry: electrification, more automation, and the rise of software-defined vehicles. Vehicle developers are finding that achieving higher levels of driving automation, along with ever more layers of software, is increasing energy demand in EVs.

Creating the software-defined vehicle and enabling features to be added via over-the-air updates requires new electrical/electronic (E/E) architectures. With these comes a nearly industry-wide adoption of more centralized compute platforms built on more powerful system-on-a-chip (SoC) processors such as the Nvidia Orin and Qualcomm Snapdragon Ride. Centralized compute is replacing the dozens of low-power microcontrollers that have been used in vehicles for decades.

These SoCs offer vastly higher performance. For example, the compute platform in Chinese EV startup Nio's ET7 sedan, dubbed Adam, uses four Orins for a combined 1,000 trillion operations per second (TOPS). That is enough to run advanced automated driving capabilities along with a variety of other functions. However, Adam also consumes over 800 W of power, which is why such systems are used only on electrified vehicles. Traditional 12V electrical systems on internal-combustion vehicles max out at about 2.5 kW, so an 800 W load would take roughly a third of the available capacity, making such a system impractical.
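The arithmetic behind these figures is easy to check. The sketch below simply takes the quoted numbers (about 1,000 TOPS, 800 W, and a roughly 2.5 kW ceiling for a 12V system) as inputs; the helper function names are hypothetical and purely illustrative.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency in trillion operations per second per watt."""
    return tops / watts


def share_of_capacity(load_w: float, capacity_w: float) -> float:
    """Fraction of an electrical system's capacity taken by a single load."""
    return load_w / capacity_w


# Figures quoted in the text for Nio's Adam platform and a 12V system.
ADAM_TOPS = 1_000.0
ADAM_POWER_W = 800.0         # quoted as "over 800 W", so treat as a floor
TWELVE_VOLT_MAX_W = 2_500.0  # approximate ceiling for a 12V system

efficiency = tops_per_watt(ADAM_TOPS, ADAM_POWER_W)
share = share_of_capacity(ADAM_POWER_W, TWELVE_VOLT_MAX_W)

print(f"Adam efficiency: {efficiency:.2f} TOPS/W")
print(f"Share of a 12V system's capacity: {share:.0%}")
```

This prints 1.25 TOPS/W and 32%. Because the quoted draw is "over 800 W," the 32% share is a lower bound, before any of the vehicle's other electrical loads are counted.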

A more extreme example comes from GM's automated driving subsidiary Cruise, where the current compute platform in its Chevrolet Bolt-based robotaxi eats up to 4 kW. Over a 10-hour shift, the compute in that robotaxi would gobble 40 kWh of the 65 kWh available in the Bolt, leaving the vehicle with a potential maximum range of about 80 miles (129 km).
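The Cruise figures reduce to a simple energy budget: compute power multiplied by shift length, subtracted from pack capacity. The sketch below reproduces the 40 kWh and 25 kWh numbers. The driving efficiency is an assumed parameter: the 3.2 mi/kWh used here is only the value implied by dividing the 80-mile estimate by the 25 kWh left over, not a published Bolt rating. The function names are hypothetical.

```python
KM_PER_MILE = 1.609344


def compute_energy_kwh(power_kw: float, hours: float) -> float:
    """Energy drawn by the compute platform over a shift."""
    return power_kw * hours


def remaining_range_miles(pack_kwh: float, compute_kwh: float,
                          miles_per_kwh: float) -> float:
    """Range left for driving once the compute energy is set aside.

    miles_per_kwh is an assumed driving efficiency, not a measured value.
    Climate control and other loads are ignored in this simple budget.
    """
    driving_kwh = pack_kwh - compute_kwh
    return driving_kwh * miles_per_kwh


PACK_KWH = 65.0
compute_kwh = compute_energy_kwh(power_kw=4.0, hours=10.0)
left_kwh = PACK_KWH - compute_kwh
# Assumption: 3.2 mi/kWh, back-calculated from the ~80-mile estimate.
miles = remaining_range_miles(PACK_KWH, compute_kwh, miles_per_kwh=3.2)

print(f"Compute energy per shift: {compute_kwh:.0f} kWh")
print(f"Energy left for driving: {left_kwh:.0f} kWh")
print(f"Estimated range: {miles:.0f} mi ({miles * KM_PER_MILE:.0f} km)")
```

Keeping efficiency as an explicit parameter makes clear that the range figure is only as good as the assumed miles per kWh, while the 40 kWh compute budget follows directly from the quoted 4 kW and 10 hours.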

Chips like Orin, and those used by Cruise and others, are very much general-purpose chips: extremely flexible and usable for a broad range of applications. However, they also carry many capabilities that automotive applications do not need, which results in wasted energy.

A new generation of SoCs from companies such as Qualcomm, Ambarella and Recogni is more optimized for automotive use cases. These chips focus on the types of processing cores needed for in-vehicle tasks such as image processing for driver assistance. In live demonstrations at CES, both Ambarella and Recogni showed their chips processing automotive data side by side with Orin systems, delivering higher throughput at a fraction of the power consumption. Cruise is also developing its own optimized processors which, the company claims, will reduce power consumption by more than 75% in next-generation vehicles.
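To put Cruise's claim in perspective, the sketch below assumes that the 75% reduction is measured against the 4 kW figure for its current robotaxi platform; the company's baseline is not stated, so treat this as illustrative only. The helper name is hypothetical.

```python
def reduced_power_kw(baseline_kw: float, reduction: float) -> float:
    """Power after a fractional reduction (0.75 means 75% lower)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_kw * (1.0 - reduction)


BASELINE_KW = 4.0   # current Cruise compute draw quoted in the text
SHIFT_HOURS = 10.0
REDUCTION = 0.75    # assumption: the claimed cut applies to the 4 kW figure

new_kw = reduced_power_kw(BASELINE_KW, REDUCTION)
saved_kwh = (BASELINE_KW - new_kw) * SHIFT_HOURS

print(f"Compute draw after reduction: {new_kw:.1f} kW")
print(f"Energy freed per shift: {saved_kwh:.0f} kWh")
```

Under that assumption, the draw falls to 1.0 kW and about 30 kWh per 10-hour shift becomes available for driving. Since the claim is "more than 75%," these are bounds: at most 1 kW of draw and at least 30 kWh saved.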

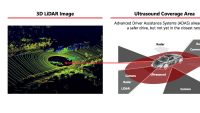

With new-generation vehicles now coming to market with higher-resolution cameras (jumping from 1.2 MP to as much as 8 MP), imaging radar and lidar, the amount of data that must be processed in real time is exploding. At the same time, there is a push for more affordable EVs with longer ranges.
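To see why the data volume grows so quickly, consider the raw, uncompressed pixel stream from a single camera. The sketch below assumes 30 frames per second and 12 bits per pixel; both are illustrative values rather than specifications of any particular sensor, and real systems may compress or downsample before processing. The function name is hypothetical.

```python
def raw_camera_rate_gbps(megapixels: float, fps: float,
                         bits_per_pixel: int) -> float:
    """Uncompressed data rate of one camera, in gigabits per second."""
    pixels_per_second = megapixels * 1e6 * fps
    return pixels_per_second * bits_per_pixel / 1e9


FPS = 30.0           # assumed frame rate
BITS_PER_PIXEL = 12  # assumed raw bit depth

old = raw_camera_rate_gbps(1.2, FPS, BITS_PER_PIXEL)
new = raw_camera_rate_gbps(8.0, FPS, BITS_PER_PIXEL)

print(f"1.2 MP camera: {old:.2f} Gbit/s")
print(f"8 MP camera:   {new:.2f} Gbit/s")
print(f"Increase per camera: {new / old:.1f}x")
```

The ratio of about 6.7x depends only on the pixel counts, so it holds regardless of the assumed frame rate and bit depth; a vehicle carrying several such cameras plus imaging radar and lidar multiplies the total accordingly.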

Simply taking a brute-force approach of adding more battery capacity and more computing horsepower is not an acceptable option. Electric propulsion is already quite efficient, but every aspect of the vehicle must become thriftier with its use of electrons if all these competing goals are to be met.

Compute power in the vehicle becomes more important by the day. But if the industry is eventually to replace all IC-engine vehicles, computing cannot be allowed to consume the energy needed for propulsion and climate control, nor to come at unlimited cost.
