Lidar Vs. Everybody in the Onboard Sensor Race

Future systems will feature a reduced sensor array, but will still need a combination of sensor tech for safe performance.

Despite the industrywide realization that SAE driving automation Levels 4 and 5 are not imminent but long-term goals, development continues on the sensors that power current and future ADAS, up to Level 3. Nothing made it clearer that lidar was the industry favorite than the 30-plus companies showing versions of the tech at the 2023 Consumer Electronics Show. That’s an unsustainable number, say industry experts, who expect the next few years to bring consolidation, with many companies leaving the market. But despite lidar’s darling status, including Luminar’s prominent launch on the Volvo EX90, other companies are banking on their own sensor technologies, from spherical radar antennas to improvements on current camera-vision systems.

Greg McGuire has an elevated view of what’s taking shape. As managing director of the public-private partnership MCity, the autonomous- and connected-car proving ground at the University of Michigan in Ann Arbor, he has dozens of relationships with suppliers, OEMs and researchers. SAE Media spoke with him about the state of the sensor industry and where it is headed.

McGuire sees a future where the data from sensors is commoditized by the OEMs, so that the sensors could be used for multiple purposes. “The park assist sensor, if it was producing parking information before, now if they can get raw data from it, they could also use it for lane keeping or collision avoidance up close,” leading to fewer sensors, he said.

Lidar’s leaders

In a little more than a year, Luminar has emerged as a leader in automotive lidar, showing a low-profile design that is already deployed or being included on vehicles from Volvo (EX90), Polestar (3) and SAIC. Last month, Mercedes and Luminar announced a long-term partnership to put the company’s lidar in “a broad range” of vehicles starting in 2025. The company projects that between 2026 and 2030, there will be 1 million Luminar-equipped vehicles on the road.

The Iris lidar, Luminar’s top product, uses an indium gallium arsenide sensor that, when paired with the company’s own ASIC, is the most sensitive in the industry and has the highest dynamic range, the company says. A lone 1550nm laser provides 1 million times the energy of a 905nm emitter “while remaining eye-safe,” according to Luminar. Interestingly, at CES Luminar CEO Austin Russell announced that he wants to tread a path not normally taken by suppliers. “We have moved from horsepower to brain power, and from luxury to technology in the minds of consumers,” he said. While most automotive suppliers are happy to work as white-label solutions, Russell wants Luminar to become a coveted brand among car shoppers.

Eitan Gertel, executive chairman of the board for Luminar competitor Opsys Technologies, says his company has produced the first truly solid-state lidar with no moving parts that is reliable and affordable for OEMs and suppliers. (There are other companies in the solid-state lidar space, some of whom claim to have developed the technology first.)

Opsys’ system consists of a central light detector with two emitters on either side. The system scans at 1,000 frames per second, and can see out to 300 meters (984 feet). Using a standard measure for lidar systems, it can identify an object with only 10% reflectivity at 150 meters. Compared to human vision, Opsys’ system is about 40 times faster at object detection.

Its lidar unit can also be placed behind a windshield or, potentially, in other places behind glass on a vehicle. Current lidar units have had trouble doing that due to reflectivity, resulting in the ubiquitous lidar hump. “Because ours has no moving parts, we can calibrate for any imperfections in the glass,” Gertel said.

Regarding cost, lidar makers are reluctant to talk about their prices, which vary according to volume and application. In February of 2022, SAE Media reported that OEMs expected to pay around $1,000 per unit for lidar. Gertel said Opsys’ system costs significantly less than those expectations. MCity’s McGuire said that eventually, he expected scale and OEM demands to drive down the cost of sensors to around $100.

Of course, part of the test of any supplier is the ability to manufacture at scale. To that end, China’s Innovusion delivered 50,000 of its lidar units to BEV maker Nio in 2022. A spokesperson said the company has the capacity to produce 300,000 units in 2023. Its latest product, Falcon, has an exceptionally long range of 250m (820 feet) for 10% reflectivity and a maximum range of 500m (1,640 feet), which is longer than many see as necessary.

Spherical radar for fewer placements

Arizona-based Lunewave is banking on its better-than-standard-radar system based on the spherical Luneberg antenna. The latest generation of its 3-D printed antenna is 25% smaller, with a radius of 30mm. “Our sensor solution is really a patented process to design and mass produce these Luneberg antennas,” said CEO John Xin. He claimed that the company is the only one in the world “that has ability not to only design but also mass produce these.”

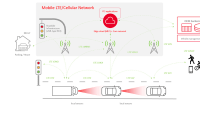

Xin says that standard radar as used in automotive is only effective in detecting within an arc of 15 to 20 degrees. That’s why automakers have, so far, compensated by placing as many as 20 to 30 sensors on vehicles. He says the Luneberg lens’ spherical construct “really means that we bring with a single radar, consistent high performance across a wide field of view, not just on the azimuth or the XY plane field, but also vertical.”

Xin said the company is collaborating with one German OEM to deliver its latest-generation device in support of SAE Level 3 autonomous operation. “It’s an unprecedented 160 degrees in azimuth and 90 degrees in elevation with a single radar.” Xin said Lunewave sensors, even if more numerous on a given vehicle, come with lower signal processing needs. “Because the spherical antenna is made up of (unidirectional) receptors, the angle calculations are already done on the hardware side.” Xin said cost savings would come from needing far fewer sensors per vehicle.

MCity’s McGuire is intrigued by the technology, which operates at a relatively high 77GHz. “We’ve done some research with, you know, the older lower frequency radars,” which he said can make it tough to distinguish stationary objects from moving cars and the like. “The higher frequency radar is really interesting because the discrimination is much better. This looks great.”
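As a rough illustration of why field of view drives sensor count, the sketch below computes how many radars would be needed to tile a full 360 degrees of azimuth with no overlap. This is a deliberate simplification and an assumption of this example, not a description of any automaker's layout: real vehicles use overlapping arcs, mix short- and long-range units, and do not necessarily need radar coverage in every direction. The function name is hypothetical; the angles are the ones quoted above.

```python
import math


def sensors_for_full_azimuth(fov_deg: float) -> int:
    """Minimum number of sensors whose azimuth arcs tile 360 degrees.

    Assumes ideal, non-overlapping arcs (a simplification for illustration).
    """
    if not 0 < fov_deg <= 360:
        raise ValueError("field of view must be in (0, 360] degrees")
    return math.ceil(360 / fov_deg)


if __name__ == "__main__":
    # 15 and 20 degrees: the effective arc Xin cites for standard radar.
    # 160 degrees: the azimuth figure Xin cites for the Lunewave device.
    for fov in (15, 20, 160):
        count = sensors_for_full_azimuth(fov)
        print(f"{fov:>3} degree arc: {count} sensors for 360 degrees")
```

Under these assumptions, narrow arcs put the count in the same range as the sensor totals Xin describes, while a 160-degree arc needs only a handful of units.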

Software-driven stereo vision

Leif Jiang, CEO of Somerville, Mass.-based Nodar, said that binocular vision systems, such as the company’s Hammerhead (named after the shark’s widely spaced eyes), could be a solution for automotive and commercial applications. Nodar is an acronym for “native optical distance and ranging.” The first comparison Jiang often hears is to Subaru’s EyeSight safety system, which comprises two cameras mounted near each other in the center of the windshield. “This is not ... two cameras close together,” he explained. “We can take these things, get rid of the heavy bar, throw it out, put these cameras on (real estate) the car gives you for free (such as side-view mirrors) and get this amazing wide baseline to really precisely triangulate the position of everything around you.”

Brad Upton, Nodar’s COO, said that at the width of a roofline, Hammerhead “can see up to a kilometer with fantastic precision.” What precision? Nodar says its software can deliver a point cloud (a dataset of spatial coordinates) of up to 5 million pixels per frame, or at least five times greater than most lidar. And it can identify a 4-inch (10cm) brick at about 500 feet (152m). The ability to handle that data in real time with signal processing, and not AI, is a big advantage, Upton said. “Even though there’s quite a lot of hype around AI neural networks, right now, actually, signal processing is known. It’s predictable. And it’s very, very fast. We think for safety critical applications like this, that need to be real time, a signal processing approach is quite critical.”
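The wide-baseline argument can be sketched with textbook pinhole stereo geometry, where depth is Z = f·B/d (focal length f in pixels, baseline B, disparity d) and a disparity error of δd gives a first-order depth error of roughly Z²·δd/(f·B). The example below is a minimal sketch of that general relationship, not Nodar’s algorithm; the focal length, disparity error and both baselines are hypothetical values chosen only for illustration.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Pinhole stereo depth: Z = f * B / d."""
    return focal_px * baseline_m / disparity_px


def depth_uncertainty(focal_px: float, baseline_m: float,
                      depth_m: float, disparity_err_px: float) -> float:
    """First-order depth error: dZ ~= Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_err_px / (focal_px * baseline_m)


if __name__ == "__main__":
    FOCAL_PX = 4000.0        # hypothetical focal length, in pixels
    DISPARITY_ERR_PX = 0.1   # hypothetical sub-pixel matching error
    RANGE_M = 150.0          # roughly the brick-detection distance cited above

    # Hypothetical baselines: cameras close together vs. spread across a roofline.
    for label, baseline_m in (("narrow", 0.35), ("wide", 1.2)):
        err = depth_uncertainty(FOCAL_PX, baseline_m, RANGE_M, DISPARITY_ERR_PX)
        print(f"{label} baseline {baseline_m} m: depth error ~{err:.2f} m at {RANGE_M:.0f} m")
```

Because the error term is inversely proportional to the baseline, spreading the same cameras farther apart shrinks the range error at any given distance, which is the geometric reason a roofline-width baseline helps at long range.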

Hammerhead itself is a software solution that can work with off-the-shelf cameras and GPUs such as Nvidia, Snapdragon and others. The software, while significantly more advanced, is similar to that which stabilizes images on modern phone cameras. “It can account for independent movement of the far-apart lenses,” Upton said. “It also gives us exquisite precision. We’ve been able to pick up a 10cm (4-inch) brick at 150 meters. And one of the unique things about our system is that we can also detect the road, because it helps in determining whether something is sitting above it.”

The Nodar execs know that their system would likely be used in combination with another.

What’s their ideal mix of sensors? Upton said it depends on the application. “At (SAE Level 3), we think that there’s a problem with the cost of lidar. And that we can fill that particular gap. And combined with radar, that could be a sufficient solution,” he said.

Thermal vision fills the gaps

Adasky, an Israeli company that makes thermal cameras, has no pretense of replacing other kinds of sensors. Instead, it frequently uses the term “enhance” to describe what it does for ADAS systems that have trouble in some scenarios. “Accident avoidance will never be good enough if nighttime or bad weather are considered outlier scenarios,” said Bill Grabowski, head of Adasky North America, during a nighttime on-street demo of the system in Las Vegas during CES. Adasky’s camera, which can hide in the palm of one hand, is passive, requiring no emitted light in order to detect objects like pedestrians, which it can identify at 328 feet (100m) with a 30-degree FOV, or 656 feet (200m) with an 18-degree FOV. The company is currently working with an American OEM that it is prevented from naming.
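The two range and field-of-view pairs quoted above reflect the usual lens trade-off: a narrower field of view reaches farther but covers a smaller angle. As a rough geometric check, the sketch below computes the width of the scene covered at a given distance, assuming the quoted FOV is horizontal and the scene is a flat plane facing the camera. Both are assumptions of this example, and the function name is hypothetical.

```python
import math


def swath_width_m(range_m: float, fov_deg: float) -> float:
    """Width covered at a given range: w = 2 * R * tan(FOV / 2)."""
    return 2.0 * range_m * math.tan(math.radians(fov_deg) / 2.0)


if __name__ == "__main__":
    # (range in meters, FOV in degrees) pairs as quoted for pedestrian detection.
    for range_m, fov_deg in ((100.0, 30.0), (200.0, 18.0)):
        width = swath_width_m(range_m, fov_deg)
        print(f"{fov_deg:.0f} degree FOV at {range_m:.0f} m covers ~{width:.1f} m across")
```

Under these assumptions, the two configurations cover a broadly similar width of road at their respective detection distances, with the narrower lens doing so twice as far away.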

While the sensor market itself is seeing a lot of competition and OEMs have many viable combination options for ADAS and autonomous systems, MCity’s McGuire thinks the sensors themselves are reaching a point of competence that will shift the developmental push to processing power.

“The key thing for these companies is really more on having compute to be able to (drive AI and use the sensor-created data) and having a way to get new stuff onto the vehicle than it is on finding a new, super-incredible sensor,” he said.
