Developing Object Detection Systems for Autonomous Underwater Vehicles

The bottom of a lake or an ocean is an ever-changing place. Water flows back and forth in shifting currents. Sunlight heats the sand and darkness cools it back down. The sand itself moves, unveiling rocks and man-made objects of peculiar shape underneath.

Two key technologies, sonar and autonomous underwater vehicles (AUVs), have given us far broader access to this complex and remote landscape. Commercial and academic participants are now figuring out how to apply advanced computer vision technology to undersea surveying. When these vision systems are based on biologically inspired deep neural networks, they are commonly considered a form of artificial intelligence (AI).

Object Detection and Classification

For ground and air vehicles, computer vision technologies are now widely used to identify and classify objects from video. Identification and classification promise two key benefits for undersea exploration. First, they promise to automate the analysis of the seafloor. Current workflows rely on AUVs taking sonar data and physically transporting it back to the surface for expert analysis. Automated object detection will allow experts to save hours of time and focus mainly on validating an AI’s findings.

Second, object detection should enable greater autonomy for AUVs, which are largely preprogrammed. Future AUVs will be expected to not just collect seafloor images but to perceive what those images mean and take immediate action, such as moving closer to an interesting object for reinspection.

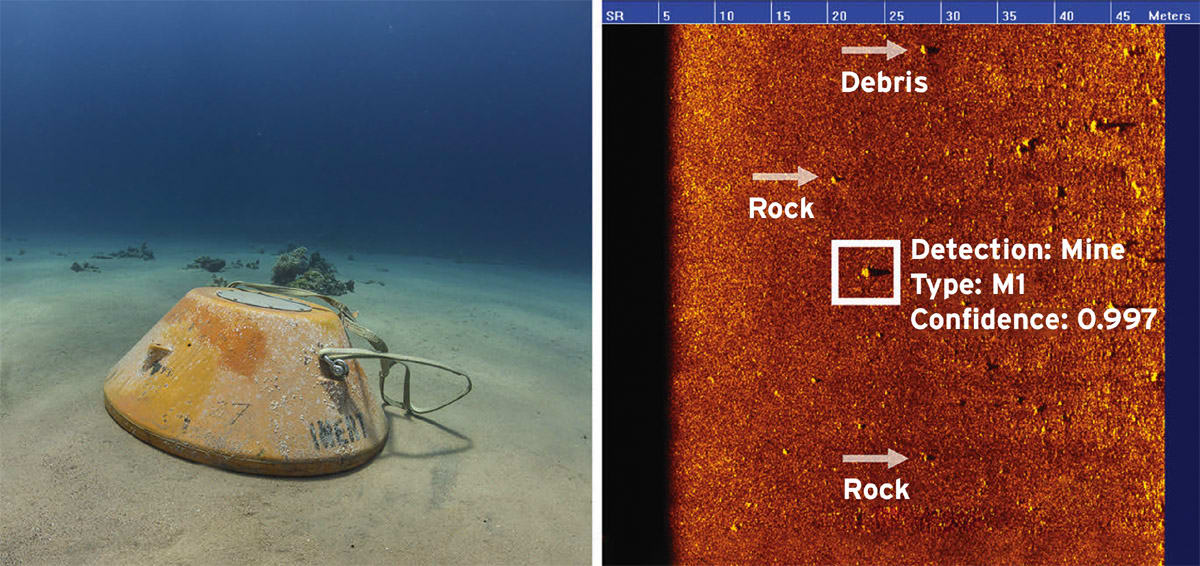

The development of object detection for undersea applications has lagged behind that for air and land, but it is catching up. Charles River Analytics recently released an object detection software product called AutoTRap Onboard™, which joins a select field of undersea object detection solutions. The current version of AutoTRap Onboard was developed in partnership with Teledyne Gavia and has been tailored for use on their Gavia AUVs. It has been shown in sea tests to detect and classify certain types of mine-like objects with a high degree of accuracy, and to do so in real time. Importantly, the software platform is readily extensible to detect other objects and to run on other platforms and sonar systems. (The current release version is optimized for side scan sonar systems manufactured by EdgeTech.)

Modular AUV components like AutoTRap Onboard are a relatively recent development, as are AUVs themselves. Ocean science and industry stakeholders began to widely adopt commercially available AUVs in the early-to-mid 2000s, according to Arjuna Balasuriya, Principal Scientist at Charles River and product lead for AutoTRap Onboard. AUVs emerged as a viable technology at that time due to advances in onboard computer processing power and battery lifetime.

Because of their size and portability, AUVs lend themselves to modularization and componentization of their separate sonar, robotics, and software functions. AUV manufacturers such as Teledyne Gavia design their systems to support that modularity. Technology components such as AutoTRap Onboard can be integrated from different, specialized vendors at lower costs than building a single monolithic solution.

Defense and Commercial Applications

In the defense community, seafloor object detection tools are sought after primarily for mine countermeasures operations. Undersea mines have long played a critical role in marine warfare and can pose a major threat to naval personnel around the world. In the mine hunting context, object detection is commonly referred to as automatic target recognition (ATR).

Large-scale ATR systems have been available much longer than AUV-based ATR solutions, according to Balasuriya. They tend to use ship- or submarine-towed sonar and rely on a monolithic design and integration of sonar, software, and ship. This historical class of large-scale ATR solutions has typically required nation-state level funding to develop and acquire. The more recent generation of modular and affordable AUV-based components can be seen as a complement to existing large-scale ATR systems.

Outside of defense, different users need computer vision tools to detect and analyze a broad range of objects: pieces of airplane wreckage (for search and rescue), lost shipping containers (for shipping insurance claim verification), and a variety of offshore oil, gas, and wind infrastructure components, which require regular evaluation and maintenance. For these communities, AUVs and other marine robotics now deliver computer vision capabilities that were formerly only available for defense applications.

The development of object detection technology for the undersea domain has posed unique difficulties. Air is easy to see through with optical and infrared cameras, which have become extraordinarily widespread and affordable. In contrast, water absorbs optical light within a few meters. For that reason, sonar technology has long been the tool of choice for “seeing” through water and for imaging the seafloor.

Sonar Technology

Current-generation AUVs come equipped with different types of modular sonar devices, including side scan sonar, forward-looking sonar, and synthetic aperture sonar (SAS). Both high-frequency and low-frequency systems are available, providing relatively higher or lower spatial resolution, respectively. Centimeter resolution is readily achievable for SAS. Side scan systems are lower resolution but more widely developed.

Sonar imagery introduces new complications to object detection. An engineer might expect to directly employ existing convolutional neural network (CNN) technology on sonar imagery, especially as sonar images can look roughly similar to aerial photography of the ground. However, CNNs must be trained on large quantities of imagery to detect objects of interest, where different images show the objects at different angles, lighting conditions, and so on. Since sonar data is much less abundant, the training and development of accurate networks requires more customization, according to Camille Monnier, Principal Scientist at Charles River and lead computer vision developer for AutoTRap Onboard.

Networks also face qualitative differences for sonar detection, such as the unique behavior of sonar shadowing. Consider a typical sonar imaging workflow. A surveying AUV sweeps over the seafloor at a controlled 5 meters in altitude, emitting acoustic pulses and receiving reflected signals in return. For an AUV using side scan sonar, the resultant picture stretches out 50 meters on either side of the vehicle’s track. Objects such as rocks on a sandy seafloor are indicated by bright spots in the image. Each rock blocks acoustic waves from hitting the region behind it, creating a shadow. A rock 50 meters away from the track casts a much longer shadow than a rock of the same size 5 meters away.
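The effect of range on shadow length can be worked out with similar triangles. The sketch below is only an illustration: it assumes a perfectly flat seafloor, straight-line acoustic rays, and a hypothetical rock 0.5 meters tall, none of which come from the AutoTRap Onboard developers. The function name `shadow_length` is likewise made up for this example.

```python
def shadow_length(altitude_m: float, ground_range_m: float, object_height_m: float) -> float:
    """Length of the acoustic shadow behind an object on a flat seafloor.

    The ray that grazes the top of the object has dropped
    (altitude - height) over the ground range. It must drop a further
    `height` before it reaches the seafloor, so by similar triangles:
        shadow = ground_range * height / (altitude - height)
    """
    if not 0 <= object_height_m < altitude_m:
        raise ValueError("object height must be non-negative and below the sonar altitude")
    return ground_range_m * object_height_m / (altitude_m - object_height_m)


# Assumed values: 5 m altitude (from the text), 0.5 m rock (illustrative only).
for ground_range in (5.0, 50.0):
    length = shadow_length(5.0, ground_range, 0.5)
    print(f"range {ground_range:>4.0f} m -> shadow {length:.2f} m")
```

Under these assumptions the shadow grows in direct proportion to range: about 0.56 meters at 5 meters from the track and about 5.56 meters at 50 meters.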

Acoustic shadowing gives the same types of objects drastically different appearances in different parts of a sonar image. These differences complicate the success of object detection systems. However, the unique shadowing of an object also provides information on its shape. AutoTRap Onboard processes this shadowing to increase the robustness and accuracy of detection.
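To see why a shadow carries shape information, consider the simplest possible case: recovering an object's height from the length of its shadow. The following is a minimal sketch under a flat-seafloor, straight-ray assumption. It is not a description of how AutoTRap Onboard processes shadows, and the function name `height_from_shadow` is hypothetical.

```python
def height_from_shadow(altitude_m: float, ground_range_m: float, shadow_length_m: float) -> float:
    """Estimate object height from its shadow on a flat seafloor.

    The grazing ray travels from the sonar (at `altitude`) to the far end
    of the shadow, a horizontal distance of (ground_range + shadow_length).
    The object's top lies on that ray, so by similar triangles:
        height = altitude * shadow_length / (ground_range + shadow_length)
    """
    if altitude_m <= 0 or ground_range_m < 0 or shadow_length_m < 0:
        raise ValueError("altitude must be positive; range and shadow must be non-negative")
    total = ground_range_m + shadow_length_m
    if total == 0:
        return 0.0  # no range and no shadow: nothing to measure
    return altitude_m * shadow_length_m / total


# Two very different shadows, measured at different ranges from a 5 m altitude,
# can correspond to the same (assumed) 0.5 m object height.
near = height_from_shadow(5.0, 5.0, 5.0 * 0.5 / 4.5)
far = height_from_shadow(5.0, 50.0, 50.0 * 0.5 / 4.5)
print(f"near estimate: {near:.3f} m, far estimate: {far:.3f} m")
```

The point of the example is that range and shadow length together constrain the object's height, even though the raw appearance changes dramatically across the image.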

Creating the underlying software for AutoTRap Onboard has at times involved just as much work as creating the detection model. Sonar remains a relatively niche technology that lacks the same level of standardization as digital optical photography. In fact, it was the standardization of camera hardware interfaces that (partially) enabled the rapid development of optical deep learning software in the early 2010s, according to Andrey Ost, Senior Software Engineer at Charles River and lead software developer for AutoTRap Onboard. By contrast, standardization for sonar data can be nonexistent, and software solutions must each pave their own way through the data. Sonar systems can also lack output APIs. AutoTRap Onboard reads the bytes directly from raw sonar output files.
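Reading bytes directly from a file, without a vendor API, generally means unpacking fixed-layout binary records by hand. The sketch below shows the general idea with Python's standard `struct` module. The record layout here (a `PING` marker, a ping number, a channel, a sample count, then 16-bit amplitudes) is entirely invented for illustration. It is not the EdgeTech format or any other real sonar format, and it is not the AutoTRap Onboard code.

```python
import io
import struct
from dataclasses import dataclass
from typing import BinaryIO, Iterator

# Hypothetical little-endian header: 4-byte marker, uint32 ping number,
# uint16 channel, uint16 sample count. Invented for this example.
HEADER = struct.Struct("<4sIHH")
MAGIC = b"PING"


@dataclass
class Ping:
    number: int
    channel: int
    samples: tuple


def encode_ping(ping: Ping) -> bytes:
    """Serialize one ping in the made-up layout (used to build test data)."""
    header = HEADER.pack(MAGIC, ping.number, ping.channel, len(ping.samples))
    return header + struct.pack(f"<{len(ping.samples)}H", *ping.samples)


def read_pings(stream: BinaryIO) -> Iterator[Ping]:
    """Yield pings from a binary stream until it is exhausted."""
    while True:
        header = stream.read(HEADER.size)
        if not header:
            return  # clean end of file
        if len(header) < HEADER.size:
            raise ValueError("truncated ping header")
        magic, number, channel, count = HEADER.unpack(header)
        if magic != MAGIC:
            raise ValueError(f"unexpected record marker {magic!r}")
        payload = stream.read(2 * count)  # two bytes per uint16 sample
        if len(payload) < 2 * count:
            raise ValueError("truncated ping payload")
        yield Ping(number, channel, struct.unpack(f"<{count}H", payload))


raw = encode_ping(Ping(1, 0, (10, 200, 3000))) + encode_ping(Ping(2, 1, (7, 8)))
pings = list(read_pings(io.BytesIO(raw)))
print(pings)
```

A real reader would follow the vendor's published record definitions and handle far more fields, but the pattern of validating a marker, reading a declared length, and failing loudly on truncation carries over.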

Ost recently redesigned the data processing pipeline for AutoTRap Onboard so that it can be adapted more easily to sonar systems with different data interfaces. Also, the product now constructs its own sonar imagery from raw sonar data, based on standard assumptions. These changes have addressed and anticipated some of the basic software engineering issues around sonar.
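One common way to make a pipeline adaptable to different data sources is to have every source implement the same small interface, so the image-building step never needs to know which sonar produced the data. The sketch below illustrates that design with a Python `Protocol`. All names (`SonarSource`, `ListSource`, `ScaledSource`, `build_waterfall`) are hypothetical, and the padding and peak-normalization choices are placeholders rather than the assumptions the product actually uses.

```python
from typing import Iterable, List, Protocol, Sequence


class SonarSource(Protocol):
    """Anything that can yield pings as sequences of amplitudes."""

    def pings(self) -> Iterable[Sequence[float]]: ...


class ListSource:
    """Source whose amplitudes are already floating-point values."""

    def __init__(self, rows: List[List[float]]) -> None:
        self._rows = rows

    def pings(self) -> Iterable[Sequence[float]]:
        return self._rows


class ScaledSource:
    """Stand-in for a second vendor that reports raw integer counts."""

    def __init__(self, rows: List[List[int]], full_scale: int) -> None:
        self._rows = rows
        self._full_scale = full_scale

    def pings(self) -> Iterable[Sequence[float]]:
        return [[v / self._full_scale for v in row] for row in self._rows]


def build_waterfall(source: SonarSource, width: int) -> List[List[float]]:
    """Stack pings into image rows, pad or crop to `width`, scale to [0, 1]."""
    rows = [(list(p) + [0.0] * width)[:width] for p in source.pings()]
    peak = max((v for row in rows for v in row), default=0.0)
    if peak <= 0:
        return rows  # empty or all-zero data: nothing to normalize
    return [[v / peak for v in row] for row in rows]


image_a = build_waterfall(ListSource([[1.0, 2.0], [4.0]]), width=3)
image_b = build_waterfall(ScaledSource([[1000, 2000], [4000]], full_scale=4000), width=3)
print(image_a)
print(image_a == image_b)
```

Because `build_waterfall` depends only on the `pings()` method, supporting another sonar in this sketch means writing one new source class and leaving the rest of the pipeline untouched.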

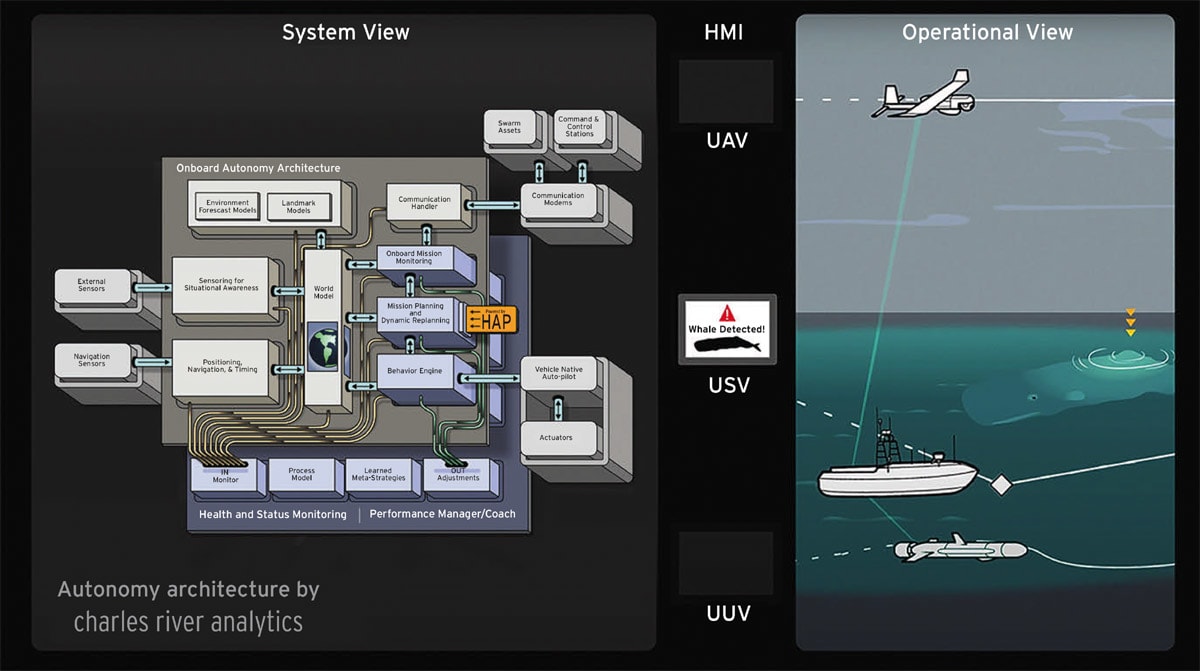

Advances will be needed in many areas in addition to software engineering and computer vision for AUVs to reach a truly autonomous state. Scientists and engineers at a number of companies are working on many of these associated autonomy technologies, including integration between computer vision and navigation, dynamic adaptation for changing environments, cooperation between autonomous vehicles, and explainable and robust AI.

All these technologies must come together to simplify and advance the understanding of the seafloor. But computer vision and object detection will play a key role. Using them, AUVs will look to the bottom, where the sands move, the seasons change, and vegetation grows only to recede once again. They will find what is there, the good things and the bad. They will make the depths known.

This article was written by Mordechai Rorvig, Science Writer, Charles River Analytics (Cambridge, MA).
